Shannon AI Pentester Explained

Your vulnerability scanner just flagged 347 issues. How many are actually exploitable? If history is any guide, only a fraction of them. Security teams have wrestled with this problem for years: traditional scanners generate mountains of alerts, but many of those findings turn out to be theoretical risks that no attacker could exploit in practice. The result is alert fatigue, wasted engineering hours, and a false sense of security. Shannon, an open-source autonomous AI pentester developed by Keygraph, takes a fundamentally different approach. Rather than flagging what might be vulnerable, Shannon proves what is vulnerable: it executes real exploits against running applications and reports only findings backed by a working proof-of-concept.

This deep dive covers what Shannon is, how it works under the hood, what it can and cannot do, and why its "No Exploit, No Report" philosophy marks a meaningful shift in how organizations can approach application security testing.

Shannon at a Glance: Definition and Core Purpose

Shannon is an autonomous, white-box AI pentester for web applications and APIs. It analyzes your source code, identifies attack vectors, and executes real exploits to prove vulnerabilities before they reach production. Keygraph developed it, and it's available both as an open-source tool on GitHub under the AGPL-3.0 license and as part of Keygraph's broader enterprise Security and Compliance Platform.

Think of it as an automated red teamer. Shannon reads your application's source code to understand its structure—routes, authentication logic, data flows—then uses browser automation and command-line tools to launch real attacks against the running application and its APIs. Those attacks span injection attacks (SQL injection, command injection), authentication bypass, Server-Side Request Forgery (SSRF), Cross-Site Scripting (XSS), and other critical vulnerability classes from the OWASP Top 10.

The defining rule is simple: No Exploit, No Report. If Shannon cannot produce a working proof-of-concept exploit for a vulnerability, that vulnerability stays out of the final report. This design decision is meant to keep false positives to a minimum, going straight at the chronic false-positive problem that plagues traditional Static Application Security Testing (SAST) and Dynamic Application Security Testing (DAST) tools.

How Shannon Works: Architecture and Workflow

Shannon runs through a multi-agent pipeline where specialized AI agents handle different phases of the penetration testing process. The system is powered by Anthropic's Claude models—including Haiku, Sonnet, and Opus variants—and uses a multi-agent architecture orchestrated by Temporal, according to reporting by Cyber Press. It integrates standard security tools like Nmap, Subfinder, and Schemathesis to augment its AI-driven analysis.

Phase 1: Reconnaissance

The first phase maps the attack surface. Shannon digs into the application's source code to identify routes, authentication mechanisms, and data handling patterns. At the same time, it scans the live application using tools like Nmap and WhatWeb to enumerate endpoints and parameters. This dual approach—reading the code and probing the running application—gives Shannon a comprehensive picture of where potential weaknesses might exist, as described by GBHackers.

Phase 2: Vulnerability Analysis

With the attack surface mapped, parallel agents analyze the code for specific vulnerability classes. Each agent zeroes in on a particular category—one might hunt for SQL injection patterns while another searches for XSS vectors. These agents generate hypotheses about which vulnerabilities are likely present and exploitable. According to GBHackers, this phase draws on the agents' understanding of common vulnerability patterns across the OWASP Top 10 categories.

Phase 3: Exploitation

Here is where Shannon diverges most sharply from traditional scanners. Dedicated "Exploit Agents" take the hypotheses from the previous phase and try to validate them by executing real attacks against the running application. As reported by GBHackers, this includes bypassing authentication mechanisms, injecting payloads, and attempting to exfiltrate data. The agents use browser automation and command-line tools to carry out these attacks, mimicking the techniques a skilled human pentester would employ.

Phase 4: Reporting

A final agent compiles confirmed findings into a structured report. Critically, this agent throws out any "hallucinated" or unproven vulnerabilities—findings where the AI suspected a flaw but couldn't successfully exploit it. According to GBHackers, only vulnerabilities with a verified, working proof-of-concept make it into the final output. Each reported vulnerability comes with evidence of successful exploitation, giving security teams immediate, actionable intelligence.
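The reporting rule can be illustrated with a few lines of Python. This is a minimal sketch, not Shannon's actual code: the `Finding` structure, its field names, and the sample findings are all hypothetical, chosen only to show how a report would drop anything that lacks a successful exploit and recorded evidence.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    """A hypothesised vulnerability (hypothetical structure, not Shannon's schema)."""
    title: str
    vuln_class: str
    proof_of_concept: Optional[str] = None  # evidence captured by an exploit attempt
    exploit_succeeded: bool = False


def build_report(findings: list[Finding]) -> list[Finding]:
    """Keep only findings backed by a successful exploit and recorded evidence."""
    return [f for f in findings if f.exploit_succeeded and f.proof_of_concept]


# Invented sample data for illustration only.
candidates = [
    Finding("Login bypass", "auth", "POST /login returned an admin session", True),
    Finding("Possible SQL injection in /search", "injection"),  # suspected, never exploited
    Finding("Reflected XSS in /profile", "xss", "alert fired in headless browser", True),
]

report = build_report(candidates)
for finding in report:
    print(f"{finding.title}: {finding.proof_of_concept}")
```

The suspected SQL injection never reaches the report because no exploit attempt succeeded, which is the behavior the phase description implies.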

The "No Exploit, No Report" Philosophy

Shannon's core principle is blunt: a vulnerability without a working exploit isn't worth reporting. This philosophy targets one of the most persistent pain points in application security—the overwhelming volume of unverified alerts that conventional tools produce.

Traditional SAST tools analyze source code for patterns that could indicate vulnerabilities, but they can't confirm whether those patterns are actually exploitable in the context of a running application. Traditional DAST tools probe running applications but often lack the source code context needed to craft sophisticated, multi-step exploits. Shannon bridges this gap. It uses code analysis to inform its attacks and live exploitation to confirm its findings.

The practical impact is significant. As noted in a review by Help Net Security, the security community has praised Shannon for reducing "alert fatigue." By proving exploits, it eliminates the time wasted validating false positives from traditional scanners. One reviewer quoted by Help Net Security captured the difference well: "Shannon doesn't say 'this login looks weak'; it bypassed the login... and handed me the screenshots."

This approach does carry a trade-off. While it dramatically cuts false positives, it can produce false negatives—vulnerabilities that exist but that Shannon's AI agents fail to exploit. According to Help Net Security, the underlying large language models can sometimes get stuck in loops or misinterpret complex application logic, leading to missed vulnerabilities. The "No Exploit, No Report" rule is a filter for quality, not a guarantee of completeness.

Performance and Benchmark Results

Shannon's effectiveness goes beyond theory. According to the project's GitHub repository, Shannon Lite achieved a 96.15% success rate—completing 100 out of 104 exploits—on the hint-free, source-aware variant of the XBOW benchmark, a standard for evaluating automated vulnerability discovery tools. As noted by Cyber Press, this score significantly outperforms many traditional automated tools, which often struggle with complex, multi-step exploits.
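The quoted percentage follows directly from the two counts, as a quick check shows:

```python
completed_exploits = 100
total_challenges = 104

# Success rate as a percentage of benchmark challenges solved.
success_rate = completed_exploits / total_challenges * 100
print(f"{success_rate:.2f}%")  # 96.15%
```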

Real-World Testing Results

Beyond benchmarks, Shannon has been tested against well-known deliberately vulnerable applications that the security community uses for training and tool evaluation:

- OWASP Juice Shop: According to both Cyber Press and GBHackers, Shannon identified over 20 critical flaws in this deliberately vulnerable application, including full authentication bypass and database exfiltration.

- OWASP crAPI: Shannon found more than 15 critical issues involving JWT (JSON Web Token) attacks, as reported by Cyber Press and GBHackers.

These results show Shannon handling the kinds of complex, chained exploits that typically demand human expertise—multi-step attacks where one vulnerability gets leveraged to enable exploitation of another.

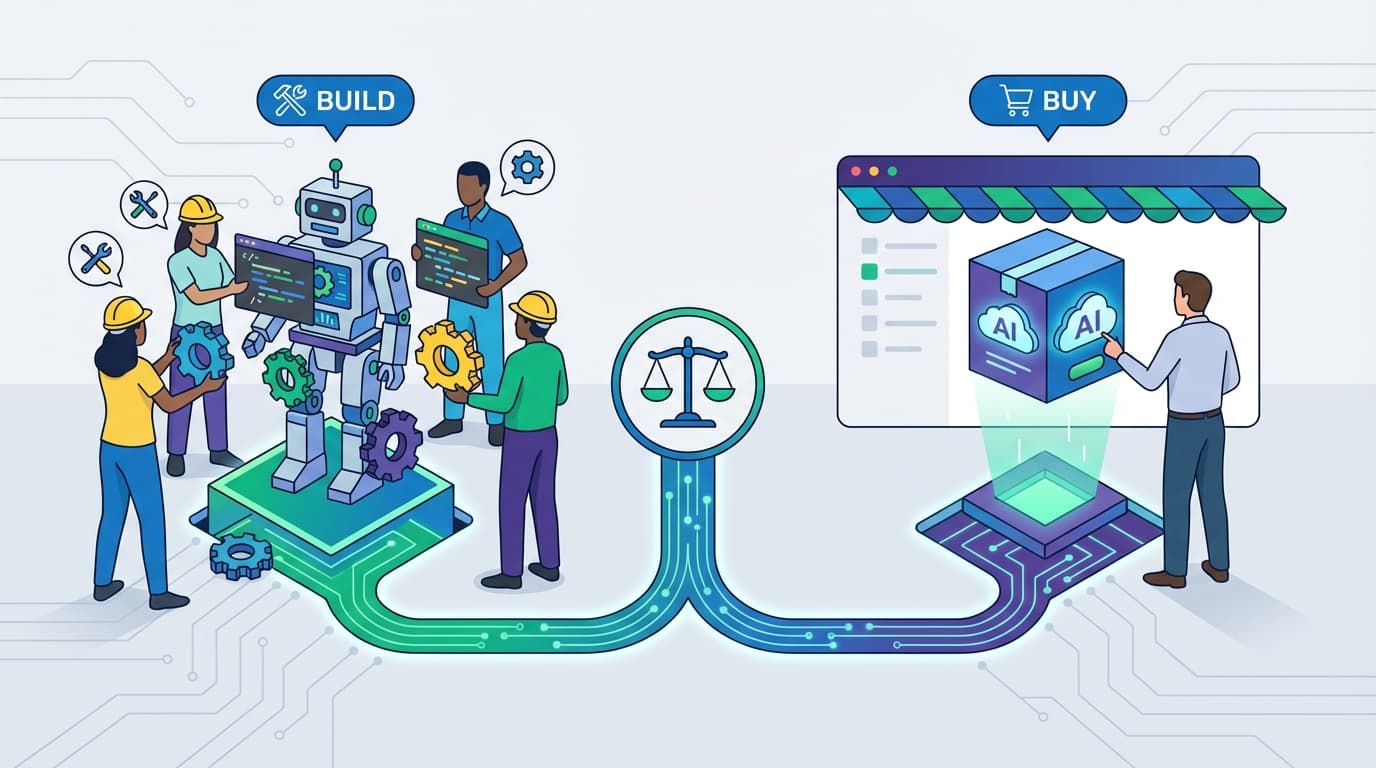

Editions and Pricing: Shannon Lite vs. Shannon Pro

Keygraph offers Shannon in two primary tiers, each targeting different use cases and organizational sizes.

Shannon Lite (Open Source)

Shannon Lite is the open-source edition available on GitHub under the AGPL-3.0 license. It targets security researchers, developers, and small teams who want to integrate autonomous penetration testing into their development workflows.

- License: AGPL-3.0 (free to use and modify)

- Deployment: Self-hosted via Docker

- Cost: The software itself is free, but users must provide their own Anthropic API key. According to ByteIota, a typical run costs approximately $50 in API credits.

That $50-per-run cost deserves context. According to Help Net Security, while this is significantly cheaper than a human penetration test (which can cost $5,000 or more), it's notably more expensive than traditional static scanners, which are effectively free to run repeatedly. The cost reflects the intensive use of large language model inference required to power Shannon's multi-agent pipeline.
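To put those two figures side by side, here is a rough back-of-the-envelope sketch. It uses only the approximate numbers quoted above; the weekly cadence in the usage example is a hypothetical input, and actual API spend will presumably vary with application size.

```python
API_COST_PER_RUN = 50        # USD, approximate figure attributed to ByteIota
HUMAN_PENTEST_COST = 5_000   # USD, lower bound cited from Help Net Security


def runs_within_budget(budget: int, cost_per_run: int = API_COST_PER_RUN) -> int:
    """Number of full runs a budget covers at the quoted per-run cost."""
    return budget // cost_per_run


def monthly_api_spend(runs_per_week: int, cost_per_run: int = API_COST_PER_RUN) -> int:
    """Rough monthly spend, assuming four weeks per month."""
    return runs_per_week * 4 * cost_per_run


print(runs_within_budget(HUMAN_PENTEST_COST))  # 100
print(monthly_api_spend(runs_per_week=5))      # 1000
```

At these figures, the low-end price of one manual engagement covers about 100 runs, but running on every weekday adds up to roughly $1,000 a month, which is why the per-run cost matters for frequent testing.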

Shannon Pro (Enterprise)

Shannon Pro is the enterprise edition offered as part of Keygraph's broader platform. According to the Keygraph pricing page, it starts at $50 per user per month, billed annually, with a minimum of 10 users.

- Bundled Features: The enterprise "Keygraph Plan" bundles Shannon with SAST, Software Composition Analysis (SCA), secrets scanning, and compliance automation for frameworks like SOC 2 and HIPAA, according to the project's GitHub repository and the Keygraph pricing page.

- Data Privacy: Shannon Pro offers a "self-hosted runner" model where source code never leaves the customer's infrastructure, according to the GitHub repository. This addresses a critical concern for enterprises that cannot send proprietary code to third-party services.
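The starting price and seat minimum quoted above imply a floor on annual spend. The sketch below works it out; treating smaller teams as billed for the 10-seat minimum is an assumption about how the minimum applies, and "starts at" means real quotes may be higher.

```python
PRICE_PER_USER_PER_MONTH = 50  # USD, the "starts at" figure from the pricing page
MINIMUM_USERS = 10


def minimum_annual_cost(users: int = MINIMUM_USERS) -> int:
    """Annual cost at the starting price (assumes the seat minimum is always billed)."""
    billed_users = max(users, MINIMUM_USERS)
    return billed_users * PRICE_PER_USER_PER_MONTH * 12


print(minimum_annual_cost())    # 6000
print(minimum_annual_cost(25))  # 15000
```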

Technology Stack and AI Foundation

Shannon's AI capabilities are built on Anthropic's Claude family of models, using the Claude Agent SDK to power its specialized agents. According to Cyber Press, the system uses multiple Claude model tiers—Haiku, Sonnet, and Opus—likely assigning different models to different tasks based on complexity and cost trade-offs.

Temporal, a workflow engine, handles the multi-agent orchestration and manages coordination between Shannon's various specialized agents. This architecture lets different agents work in parallel during vulnerability analysis and hand off findings sequentially during exploitation and reporting.
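The fan-out and hand-off pattern described here can be sketched with Python's standard `asyncio` module. This is a stand-in for illustration only: Shannon uses Temporal, not `asyncio`, and the agent functions, vulnerability class names, and hard-coded exploit outcomes below are all hypothetical.

```python
import asyncio

VULN_CLASSES = ["injection", "xss", "ssrf", "auth"]  # illustrative categories


async def analyze(vuln_class: str, attack_surface: dict) -> dict:
    """Stand-in for one analysis agent; returns a hypothesis for its class."""
    await asyncio.sleep(0)  # placeholder for model and tool calls
    return {"class": vuln_class, "targets": attack_surface["routes"]}


async def exploit(hypothesis: dict) -> dict:
    """Stand-in for an exploit agent; the outcome here is hard-coded."""
    await asyncio.sleep(0)
    confirmed = hypothesis["class"] in {"injection", "auth"}
    return {**hypothesis, "confirmed": confirmed}


async def run_pipeline(attack_surface: dict) -> list[dict]:
    # Parallel fan-out: one analysis task per vulnerability class.
    hypotheses = await asyncio.gather(
        *(analyze(c, attack_surface) for c in VULN_CLASSES)
    )
    # Sequential hand-off: each hypothesis goes to exploitation in turn.
    results = []
    for hypothesis in hypotheses:
        results.append(await exploit(hypothesis))
    # Reporting keeps only confirmed results.
    return [r for r in results if r["confirmed"]]


confirmed = asyncio.run(run_pipeline({"routes": ["/login", "/search"]}))
print([r["class"] for r in confirmed])  # ['injection', 'auth']
```

A durable workflow engine adds what this sketch lacks, such as retries and persisted state across long-running steps, which is presumably part of why the project relies on one.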

Shannon also integrates a suite of established security tools that are standard in the penetration testing community:

- Nmap: For network scanning and service enumeration

- Subfinder: For subdomain discovery

- Schemathesis: For API schema-based testing

- WhatWeb: For web technology fingerprinting

This combination of AI-driven reasoning with proven security tooling lets Shannon draw on the best of both worlds: the pattern recognition and adaptive reasoning of large language models, paired with the reliability and precision of purpose-built security scanners.

How Shannon Compares to Other AI Security Tools

Shannon occupies a distinct position among AI-powered security tools: it is fully autonomous, exploit-verified, and available as open source under AGPL-3.0. According to Help Net Security, Shannon is seen as more autonomous and "exploit-focused" than tools like PentestGPT, which is often viewed as a copilot that requires more human guidance.

A key differentiator is Shannon's ability to run "headless"—without human interaction—according to Help Net Security. Many AI security assistants function as intelligent aids that suggest next steps for a human operator. Shannon is designed to execute the entire penetration testing workflow autonomously, from reconnaissance through exploitation to reporting.

This distinction matters for organizations looking to scale their security testing. A copilot-style tool still requires a skilled security professional to drive the process. Shannon, by contrast, operates independently, making it potentially valuable for organizations that lack dedicated red team resources or want to run continuous security testing as part of their CI/CD pipeline.

Critical Limitations and Risks

Shannon is a powerful tool, but it comes with important limitations and risks that users need to understand before deploying it.

Never Run on Production

This is the single most critical safety consideration. According to GBHackers, because Shannon executes real, mutative exploits—including actions like dropping database tables and creating admin users—it must never be run on production environments. Shannon is strictly designed for staging or development sandboxes. Running it against a production system could cause data loss, service disruption, or unauthorized access that persists after the test.
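One way a team might enforce this rule in its own wrapper scripts is an explicit allowlist of disposable hosts, checked before any test is launched. The sketch below is not a Shannon feature; the function name and the host list are hypothetical and would need to match your own environments.

```python
from urllib.parse import urlparse

# Hypothetical allowlist: hosts known to be disposable test environments.
ALLOWED_HOSTS = {"localhost", "127.0.0.1", "staging.example.test"}


def assert_safe_target(url: str) -> str:
    """Return the URL if its host is allowlisted; raise ValueError otherwise."""
    host = urlparse(url).hostname
    if host not in ALLOWED_HOSTS:
        raise ValueError(
            f"Refusing to test {host!r}: not an allowlisted non-production host"
        )
    return url


print(assert_safe_target("http://localhost:3000"))

try:
    assert_safe_target("https://shop.example.com/checkout")
except ValueError as err:
    print(err)
```

An allowlist fails closed: a target that nobody has explicitly marked as safe is rejected, which suits a tool whose exploits can modify or destroy data.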

White-Box Requirement

Shannon cannot function as a "black-box" scanner. According to GBHackers, it strictly requires source code access to map the application's structure and identify potential attack vectors. This rules it out for scenarios where source code is unavailable—testing third-party applications, vendor-supplied software, or legacy systems where the original code has been lost.

Vulnerability Coverage Gaps

According to Help Net Security, experts note that Shannon is laser-focused on specific vulnerability classes, primarily the OWASP Top 10, and may miss business logic flaws or configuration issues that don't fit its pre-trained patterns. A human pentester might notice that a particular business workflow allows unauthorized fund transfers through a sequence of legitimate-seeming actions—the kind of nuanced, context-dependent flaw that Shannon's current architecture isn't designed to detect.

AI Hallucination and False Negatives

The "No Exploit, No Report" policy is designed to filter out hallucinated findings and keep false positives to a minimum, but it does nothing about false negatives. According to Help Net Security, the underlying LLMs can get stuck in loops or misinterpret complex application logic, causing Shannon to miss vulnerabilities that a human tester would catch. Shannon should be viewed as a powerful complement to human security expertise, not a complete replacement.

Ideal Use Cases for Shannon

Given its capabilities and limitations, Shannon is best suited for specific scenarios in the application security lifecycle.

- Pre-release security validation: Running Shannon against a staging environment before a major release can catch exploitable vulnerabilities that static analysis missed.

- Supplementing manual pentests: Shannon can serve as a first pass before engaging expensive human pentesters, letting the human team focus on business logic flaws and complex attack chains that Shannon might miss.

- Developer security education: The detailed proof-of-concept exploits in Shannon's reports help developers understand exactly how their code can be attacked, making the findings far more impactful than abstract scanner warnings.

- Continuous security for resource-constrained teams: Organizations that can't afford regular manual penetration tests can use Shannon to maintain a baseline level of exploit-verified security testing.

Getting Started with Shannon

For teams interested in trying Shannon, the open-source Shannon Lite edition is available on GitHub under the AGPL-3.0 license. According to ByteIota, deployment is handled via Docker, and users need to provide their own Anthropic API key to power the AI agents.

The basic requirements are straightforward: access to the target application's source code, a running instance of the application in a non-production environment, and an Anthropic API key with sufficient credits. The multi-agent pipeline handles the rest autonomously, delivering a report of verified, exploitable vulnerabilities.
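The three requirements lend themselves to a quick preflight check before spending API credits. The following sketch is hypothetical and not part of Shannon. In particular, `ANTHROPIC_API_KEY` is the variable name Anthropic's own SDKs read, and whether Shannon expects the same name is an assumption to verify against the project's README.

```python
import os
from pathlib import Path


def preflight(source_dir: str, target_url: str, env=os.environ) -> list[str]:
    """Return a list of problems; an empty list means the basics are in place."""
    problems = []
    if not Path(source_dir).is_dir():
        problems.append(f"source directory not found: {source_dir}")
    if not target_url.startswith(("http://", "https://")):
        problems.append(f"target is not an http(s) URL: {target_url}")
    if not env.get("ANTHROPIC_API_KEY"):  # assumed variable name
        problems.append("ANTHROPIC_API_KEY is not set")
    return problems


for problem in preflight(".", "http://localhost:3000"):
    print(problem)
```

This check only confirms that the inputs exist. It cannot tell whether the target is a non-production environment or whether the API key has enough credit.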

For enterprise teams that need additional capabilities like compliance automation, secrets scanning, and SCA, Shannon Pro is available through Keygraph's platform starting at $50 per user per month billed annually, according to the Keygraph pricing page.

The Bigger Picture: What Shannon Signals About AI in Security

Shannon reflects a broader trend in application security: the shift from detection to verification. For years, the security industry optimized for finding as many potential issues as possible, producing tools that prioritize recall (catching everything) over precision (only reporting real issues). Shannon inverts this priority, optimizing for precision by requiring proof of exploitability.
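The precision and recall trade-off can be made concrete with a small calculation. The counts below are invented purely for illustration and are not measurements of Shannon or of any scanner: they assume an application with 25 real vulnerabilities, a noisy tool that reports 200 findings, and a verification-first tool that reports 15.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Share of reported findings that are real."""
    reported = true_positives + false_positives
    return true_positives / reported if reported else 0.0


def recall(true_positives: int, false_negatives: int) -> float:
    """Share of real vulnerabilities that were reported."""
    actual = true_positives + false_negatives
    return true_positives / actual if actual else 0.0


# Hypothetical noisy scanner: 200 findings, 20 of them real, 5 real issues missed.
print(precision(20, 180), recall(20, 5))  # 0.1 0.8

# Hypothetical exploit-verified tool: 15 findings, all real, 10 real issues missed.
print(precision(15, 0), recall(15, 10))   # 1.0 0.6
```

In this made-up scenario the verified tool finds fewer of the real issues, but every finding it reports is worth acting on, which is the trade the article describes.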

This approach aligns with a growing recognition that the bottleneck in application security isn't finding vulnerabilities—it's triaging and fixing them. When a scanner produces hundreds of alerts, most of them theoretical, the practical result is often that none get fixed promptly. When a tool produces a handful of findings, each backed by a working exploit and screenshots, the urgency and clarity are immediate.

Shannon is not a silver bullet. Its white-box requirement, OWASP Top 10 focus, and potential for false negatives mean it cannot replace a comprehensive security program. But as one component of a layered security strategy—complementing static analysis, manual code review, and periodic human penetration testing—it offers something genuinely new: autonomous, exploit-verified security testing that cuts through the noise and delivers actionable proof.
